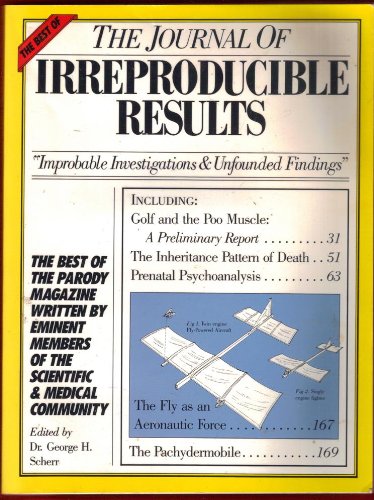

We always laughed at the papers in the “Journal of Irreproducible Results”. Then we had the replication crisis, and nobody laughed anymore.

And today? Irreproducible research seems set to reach new heights. Elizabeth Gibney discusses an arXiv paper by Sayash Kapoor and Arvind Narayanan, basically saying that

> reviewers do not have the time to scrutinize these models, so academia currently lacks mechanisms to root out irreproducible papers, he says. Kapoor and his co-author Arvind Narayanan created guidelines for scientists to avoid such pitfalls, including an explicit checklist to submit with each paper … The failures are not the fault of any individual researcher, he adds. Instead, a combination of hype around AI and inadequate checks and balances is to blame.

Algorithms getting stuck on shortcuts that don’t always hold have been discussed here earlier. Data leakage (good old confounding) due to proxy variables also seems to be a common issue.
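To make the proxy-variable problem concrete, here is a minimal sketch in Python. It is a toy illustration, not the setup from the Kapoor and Narayanan paper. All names and numbers are hypothetical. The `proxy` column stands for something like a treatment flag that is recorded only after the diagnosis, so it almost copies the label in the collected data but carries no information at prediction time.

```python
import random


def make_rows(n, rng, proxy_available=True):
    """Return (signal, proxy, label) tuples for a synthetic diagnosis task."""
    rows = []
    for _ in range(n):
        label = rng.randint(0, 1)
        # Genuine but weak predictor: class means differ by 0.5 standard deviations.
        signal = 0.5 * label + rng.gauss(0.0, 1.0)
        if proxy_available:
            # Leaky proxy: agrees with the label 95% of the time.
            proxy = label if rng.random() < 0.95 else 1 - label
        else:
            # At prediction time the flag has not been set yet.
            proxy = 0
        rows.append((signal, float(proxy), label))
    return rows


def fit_threshold(rows, col):
    """One-feature classifier: cut halfway between the two class means."""
    pos = [r[col] for r in rows if r[2] == 1]
    neg = [r[col] for r in rows if r[2] == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2.0


def accuracy(rows, col, threshold):
    """Share of rows where 'value > threshold' matches the label."""
    hits = sum((r[col] > threshold) == (r[2] == 1) for r in rows)
    return hits / len(rows)


SIGNAL, PROXY = 0, 1
rng = random.Random(0)
train = make_rows(2000, rng)
test = make_rows(1000, rng)  # held-out data, same leaky collection process
deploy = make_rows(1000, rng, proxy_available=False)  # real-world use

t_signal = fit_threshold(train, SIGNAL)
t_proxy = fit_threshold(train, PROXY)

acc_signal_test = accuracy(test, SIGNAL, t_signal)
acc_proxy_test = accuracy(test, PROXY, t_proxy)
acc_proxy_deploy = accuracy(deploy, PROXY, t_proxy)

print(f"genuine feature, held-out test: {acc_signal_test:.2f}")
print(f"proxy feature, held-out test:   {acc_proxy_test:.2f}")
print(f"proxy feature, deployment:      {acc_proxy_deploy:.2f}")
```

The point of the sketch is that an ordinary train/test split does not reveal the problem, because the test set inherits the same leak. The proxy model looks excellent on held-out data and falls to roughly coin-flip level once the proxy is no longer informative.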