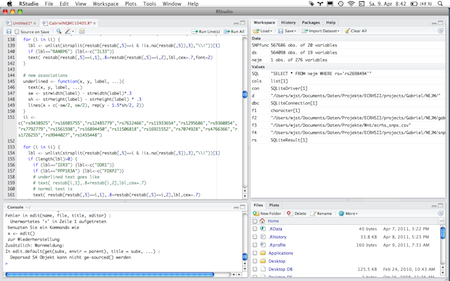

A recent article on WPA hacking using the Amazon EC2 cloud computing facility made me wonder whether there couldn't be more useful projects. Gene-gene interaction testing, for example, would be nice, and indeed somebody has already set up a way to use R: Robert Grossman, director of informatics at the Institute for Genomics and Systems Biology. Great, many thanks!

What's even better:

> … 60 Genomes dataset can be found here, as part of the public data that Bionimbus makes available to researchers. With the Bionimbus Community Cloud, the data is available via both the commodity Internet, as well as via high performance research networks, such as the National LambdaRail and Internet2 … If you are a member of the Bionimbus Community Cloud, then you don’t need to download the data but can compute over the data directly with Bionimbus. Currently, we are not making the Bionimbus Cloud generally available, but expect to do so beginning in approximately June, 2011.

CC-BY-NC Science Surf, accessed 07.05.2026