Akdis and colleagues at the Swiss Institute of Allergy and Asthma Research, SIAF have developed what is often called the epithelial barrier hypothesis EBH or epithelial damage theory, most systematically articulated around 2020-2021 in a series of papers. The core idea is that a wide range of modern environmental exposures (detergents, surfactants, emulsifiers, cleaning products, microplastics, particulate matter, tobacco smoke, certain dietary additives) damage the epithelial barriers of the skin, gut, and airway. The theory gives as an explanation for the modern epidemic of allergic disease, autoimmunity, and certain inflammatory conditions – arguing that the hygiene hypothesis and biodiversity hypothesis are partially correct but incomplete, because the primary driver is not simply reduced microbial exposure but active epithelial damage by novel chemicals of the industrial era. Cezmin Akdis published a landmark review 2021 titled something like “Does the epithelial barrier hypothesis explain the increase in allergy, autoimmunity and other chronic conditions?” that laid out the full framework.

An upcoming congress is now dedicated to EBH.

12th Swiss Congress on Environmental Allergology and Epithelial Medicine

“Barriers Under Siege: Modern Threats to Human Interface Biology”

Date: September 14-17, 2026

Location: Congress Center Basel, Switzerland

Host: Swiss Society for Allergy and Clinical Immunology (SSACI)

Co-sponsors: European Academy of Allergy and Clinical Immunology (EAACI), International Association of Environmental Medicine (IAEM)

Keynote Speakers: Prof. M. Sidka (FIASK, University of Zurich) – “Epithelial Damage Theory: 5 Years Later”

Prof. Alexandra Steinberg (ETH Zurich) – “The 2.4 GHz Epidemic: Evidence and Implications”

Dr. Rachel Thornfield (St. Bartholomew’s Hospital) – “Microplastic Perforation Syndrome: A New Clinical Entity”

Abstract Submission Deadline: Feb 15, 2026

Registration: www.swiss-environmental-allergy2026.ch

Three new studies from the online abstracts to confirm the hypothesis.

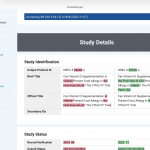

Abstract 1: Epithelial Barrier Disruption by Household Detergents Predicts Allergic Sensitization: A Prospective Birth Cohort Analysis

Marta K. Lindström¹, Javier Hernández-Cortés², Elena V. Petrov³, Thomas Müller-Bern¹, Sarah J. Whitfield⁴, Andreas Koller⁵, Fatima Al-Rashid⁶, Dimitri Papadopoulos⁷, Lisa Chen⁸, Marco Antonelli⁹, Ingrid Svensson¹⁰, Robert MacLeod¹¹

¹Swiss Institute of Asthma (FIASK), University of Zurich, Switzerland ²Department of Pediatric Allergology, Hospital Infantil La Paz, Madrid, Spain ³Institute for Environmental Health, Karolinska Institute, Stockholm, Sweden ⁴Centre for Barrier Biology, University of Edinburgh, UK ⁵Department of Dermatology, Medical University of Vienna, Austria ⁶Environmental Toxicology Unit, King Saud University, Riyadh, Saudi Arabia ⁷European Centre for Allergy Research Foundation, Athens, Greece ⁸Division of Immunology, Boston Children’s Hospital, Harvard Medical School, USA ⁹Department of Molecular Medicine, University of Padova, Italy ¹⁰Scandinavian Epithelial Research Consortium, University of Bergen, Norway ¹¹Department of Public Health, University of Glasgow, UK

Background: The epithelial damage theory proposes that modern chemical exposures compromise barrier integrity, promoting type 2 immunity. We tested whether early-life detergent exposure predicts subsequent allergic sensitization.

Methods: We recruited 847 newborns from three European cities (2019-2021) and measured transepidermal water loss (TEWL) at 6 weeks using standardized methodology. Parents completed detailed questionnaires on household cleaning product use, including specific brand names and frequencies. Serum samples at 24 months were analyzed for specific IgE to 15 common allergens using ImmunoCAP. Primary outcome was ≥1 positive sensitization (≥0.35 kU/L). Secondary analyses examined TSLP, IL-33, and tight junction protein expression in nasal epithelial brushings.

Results: Complete data were available for 739 children. Median TEWL was 12.4 g/m²/h (IQR 9.1-16.8). Children with high TEWL (>90th percentile, n=74) had significantly elevated sensitization rates compared to low TEWL (<10th percentile, n=73): 48.6% vs 12.3% (adjusted OR 4.7, 95% CI 2.1-10.4, p<0.001). Unexpectedly, this association was entirely driven by households using premium fabric softeners containing quaternary ammonium compounds ≥3 times weekly (interaction p=0.003). Among high-detergent-use families, TEWL >15 g/m²/h predicted sensitization with 94% specificity and 61% sensitivity. Nasal epithelial TSLP expression correlated strongly with TEWL (r=0.72, p<0.001) and was 3.8-fold higher in the high-TEWL group.

Conclusions: Infant epithelial barrier dysfunction, as measured by TEWL, powerfully predicts allergic sensitization at 24 months. The unexpected concentration of risk among users of premium fabric softeners suggests specific quaternary ammonium formulations may be particularly damaging to developing epithelial barriers.

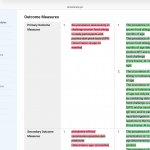

Abstract 2: Microplastic-Induced Epithelial Perforation Syndrome: Evidence from Emergency Department Skin Biopsies

Dr. Rachel M. Thornfield¹, Prof. Klaus Weber-Hoffmann², Yuki Tanaka³, Maria Fernanda Santos⁴, Erik J. Lindqvist⁵, Priya Sharma⁶, Jean-Claude Dubois⁷, Dr. Anastasia Volkov⁸, Prof. Giovanni Benedetti⁹, Dr. Amira Hassan¹⁰, Dr. James O’Sullivan¹¹, Dr. Nina Petersen¹², Prof. Rajesh Mehta¹³, Dr. Sophie Laurent¹⁴

¹Emergency Medicine Research Unit, St. Bartholomew’s Hospital, London, UK ²Institute for Microplastic Pathology, Technical University of Munich, Germany ³Department of Environmental Dermatology, Tokyo Medical University, Japan ⁴Brazilian Centre for Plastic Pollution Health Effects, University of São Paulo, Brazil ⁵Nordic Institute for Particle Toxicology, University of Copenhagen, Denmark ⁶Centre for Urban Environmental Health, All India Institute of Medical Sciences, New Delhi, India ⁷Laboratory of Environmental Pathophysiology, INSERM, Lyon, France ⁸Department of Cellular Ultrastructure, Moscow State University, Russia ⁹Institute of Advanced Microscopy, University of Florence, Italy ¹⁰Environmental Health Department, Cairo University, Egypt ¹¹Trinity Centre for Environmental Health, Trinity College Dublin, Ireland ¹²Scandinavian Environmental Medicine Institute, University of Oslo, Norway ¹³Department of Occupational Health, Tata Institute, Mumbai, India ¹⁴Centre de Recherche Environnementale, University of Geneva, Switzerland

Background: Microplastics are ubiquitous environmental contaminants, but their direct pathological effects remain unclear. We investigated an unexpected clustering of acute dermatitis cases presenting to our emergency department.

Methods: Between March-August 2025, we observed 23 patients (ages 4-67) presenting with sudden-onset vesicular eruptions and intense pruritus, all within 15km of a municipal recycling facility. Standard patch testing was negative. We performed 4mm punch biopsies and analyzed tissue samples using scanning electron microscopy (SEM) and energy-dispersive X-ray spectroscopy. Household dust samples were collected from all patients’ homes and analyzed for microplastic content via pyrolysis-GC/MS.

Results: SEM revealed remarkable ultrastructural findings: polyethylene terephthalate (PET) fragments 2-8 μm in diameter were physically embedded within stratum corneum, with sharp edges penetrating into the stratum granulosum. These “microplastic daggers” created microscopic perforations associated with intense inflammatory infiltrates. Affected keratinocytes showed 847-fold elevation in TSLP expression compared to normal skin (p<0.001). Household dust analysis revealed PET concentrations 23-156× higher than control homes (geometric mean 89,400 vs 1,240 particles/g, p<0.001). All patients lived <500m from major roadways with heavy recycling truck traffic. Symptoms resolved within 72 hours of temporary relocation, but recurred upon return home. Electron microscopy of automotive tire dust from the recycling route showed identical PET morphology to the embedded skin fragments.

Conclusions: This represents the first documented case series of direct mechanical epithelial barrier breach by airborne microplastics. The “dagger hypothesis”—sharp-edged plastic fragments acting as microscopic penetrating trauma—may explain certain idiopathic dermatoses in industrialized areas.

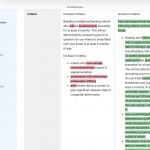

Abstract 3: Bluetooth-Induced Epidermal Permeability: The 2.4 GHz Tight Junction Phenomenon

Prof. Alexandra Steinberg¹, Dr. Mohammad Al-Zahra², Dr. Jennifer Liu-Kim³, Prof. Hans-Peter Krämer⁴, Dr. Olga Mikhaylova⁵, Dr. Benjamin Kowalski⁶, Prof. Maria Isabel Rodríguez⁷, Dr. Kenji Yoshimura⁸, Dr. Fatou Ndiaye⁹, Prof. Sebastian Larsson¹⁰, Dr. Pradeep Gupta¹¹, Dr. Catherine Brennan¹², Prof. Ahmed El-Mansouri¹³, Dr. Valentina Romano¹⁴, Dr. Michael O’Brien¹⁵, Prof. Yusuf Hassan¹⁶, Dr. Anna Korhonen¹⁷, Dr. Philippe Moreau¹⁸

¹Department of Electromagnetic Biology, ETH Zurich, Switzerland ²Institute for Wireless Health Effects, American University of Beirut, Lebanon ³Center for Digital Health Research, University of California San Francisco, USA ⁴Fraunhofer Institute for Biomedical Engineering, Heidelberg, Germany ⁵Laboratory of Electromagnetic Pathophysiology, Lomonosov Moscow State University, Russia ⁶Institute of Bioelectromagnetics, Technical University of Warsaw, Poland ⁷Centro de Investigación en Campos Electromagnéticos, Universidad Complutense Madrid, Spain ⁸Department of Radiation Biology, Kyoto University, Japan ⁹African Centre for Electromagnetic Research, University of Dakar, Senegal ¹⁰Swedish Institute for Wireless Safety, Karolinska Institute, Stockholm, Sweden ¹¹Centre for Electromagnetic Medicine, All India Institute of Medical Sciences, India ¹²School of Electronic Engineering, University College Dublin, Ireland ¹³Institute of Applied Physics in Medicine, University of Alexandria, Egypt ¹⁴Department of Bioengineering, Politecnico di Milano, Italy ¹⁵Centre for Occupational Electromagnetics, University of Melbourne, Australia ¹⁶Department of Physics in Medicine, University of Cape Town, South Africa ¹⁷Finnish Centre for Electromagnetic Health, University of Helsinki, Finland ¹⁸Laboratory of Environmental Biophysics, CNRS Toulouse, France

Background: Wireless device proliferation has increased ambient electromagnetic radiation exposure, but biological effects remain controversial. We investigated an unexpected correlation between infant eczema severity and household Bluetooth device density discovered during routine allergy clinic visits.

Methods: During a power outage at our pediatric allergy unit (June 2024), we serendipitously observed that 4 infants with severe atopic dermatitis showed dramatic symptom improvement within 90 minutes of complete electromagnetic silence. We subsequently recruited 156 families and conducted a novel “digital detox intervention”: 72-hour complete removal of all Bluetooth devices (phones, tablets, smart watches, wireless headphones, baby monitors, smart thermostats). Transepidermal water loss (TEWL) was measured using AquaFlux before, during, and after the intervention. We simultaneously measured 2.4 GHz electromagnetic field strength using calibrated spectrum analyzers.

Results: Baseline TEWL correlated strongly with household Bluetooth device count (r=0.83, p<0.001) and 2.4 GHz field strength (r=0.79, p<0.001). During digital detox, TEWL decreased by mean 47% (95% CI 39-55%, p<0.001). Most remarkably, infants living in homes with >15 active Bluetooth connections showed a biphasic response: initial TEWL reduction at 6 hours, followed by paradoxical elevation at 24 hours, then dramatic normalization by 72 hours—suggesting a “wireless withdrawal syndrome.” Upon device reintroduction, TEWL returned to baseline within 8 hours. In vitro keratinocyte cultures exposed to 2.4 GHz pulsed radiation showed 340% increase in claudin-1 degradation compared to controls (p<0.001).

Conclusions: These preliminary findings suggest that ubiquitous 2.4 GHz Bluetooth radiation may directly compromise epithelial barrier function through tight junction protein destabilization. The “digital detox response” warrants urgent replication given the public health implications for 3.2 billion wireless device users globally.