For most researchers it takes a long time to develop ideas, run experiments, do the analysis and write up the results to the standard that journals expect. By the time you get the reviews back for a piece of work, you are likely coming towards the end of your funding, or your funding may have long since run out. If a reviewer points out a likely problem and you recognise it as such, you are often left with the thought that you don’t have the time to go back to the drawing board. A better idea might come the next day, but it could also require several months of intense work, and those months may not be available.

As a researcher you are not only emotionally invested in your hypothesis (with all the inadvertent biases you may then apply to your study), you have also literally invested a lot of your time and money in it.

I wonder if the state of science publishing could be vastly improved if we started with something similar to what physicists do and expanded on it.

Physics has theoretical physicists, who develop hypotheses, and experimental physicists, who then design experiments to test those hypotheses.

This could be taken a step further in all scientific fields, for example with a further division of responsibility.

https://www.science.org/doi/epdf/10.1126/science.adk1852

Honest mistakes happen, and journals need to be accessible and on the record about their behaviors. Issuing carefully worded statements and “no comment” has no place in a generative culture. Meanwhile, although there have been good recent discussions about universities and journals working together to accelerate corrections and retractions, the universities need to realize that threats of litigation may not be the major consideration when so many within and outside the scientific community are losing trust in science.

https://www.science.org/doi/epdf/10.1126/science.adw5838

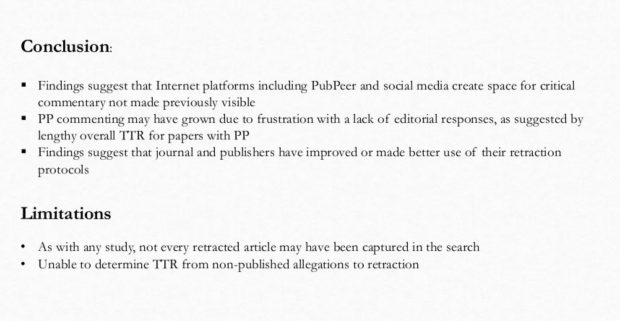

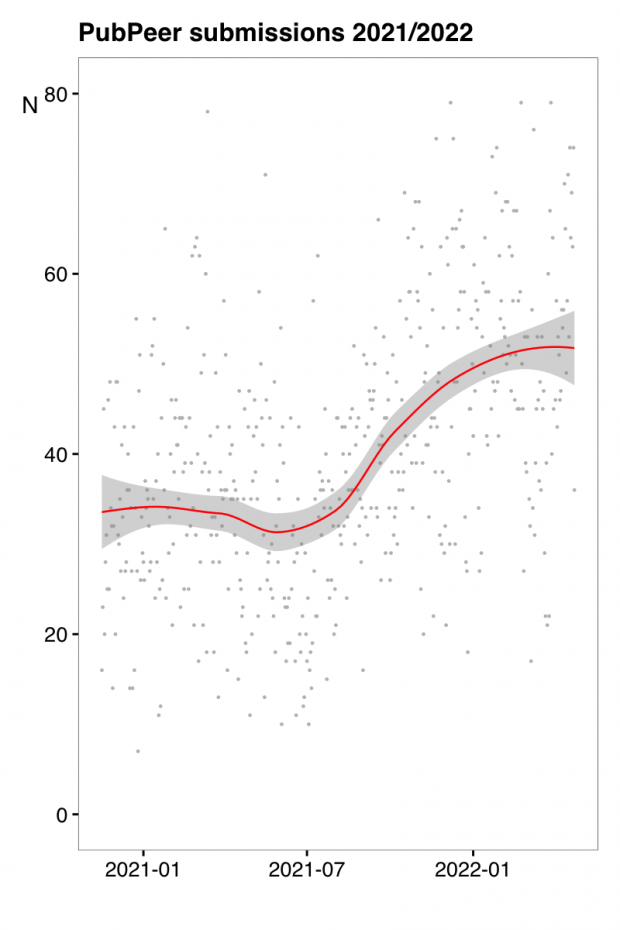

Media and public interest in research integrity cases – spurred by online platforms like X, Bluesky, and PubPeer that give a front row seat to potential disputes in real time – is increasing … A university is likely to opt for silence because of fear of litigation and damage to the institution’s reputation. However, authors should ask themselves whether silence could be interpreted by the media and public as an admission of guilt. So, in addition to consulting with institutional professionals, authors should think about talking to the media directly. This can be an opportunity to provide the unvarnished truth in response to tough questions.